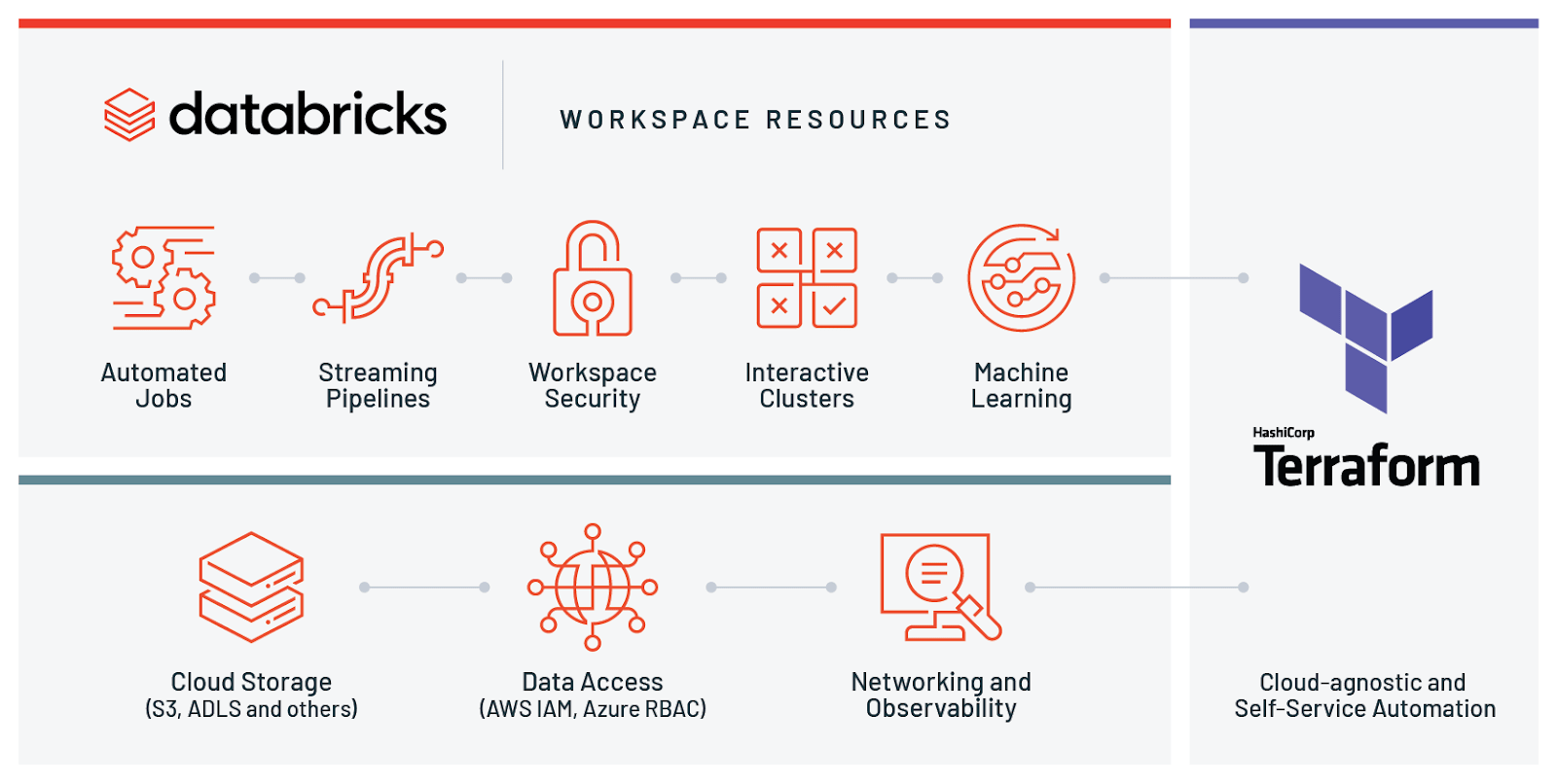

Interactive clusters can be configured to shut down after a certain period of inactivity. This makes them an excellent option if you are running multiple notebooks within the same pipeline. Using job clusters, one would be spun up for each notebook. If you instead use an interactive cluster with a very short auto-shutdown time, the same cluster can be reused for each notebook and then shut down when the pipeline ends. However, you pay for the amount of time that a cluster is running, so leaving an interactive cluster running between jobs will incur a cost.

Once the Databricks connection is set up, you will be able to access any notebooks in the workspace of that account and run them as a pipeline activity on your specified cluster. You can either upload existing Jupyter notebooks and run them via Databricks, or start from scratch.

As I've mentioned, the existing ETL notebook we were using relied on the Pandas library. Pandas is installed by default on Databricks clusters, and can be used in all Databricks notebooks as you would in Jupyter. However, the data we were using resided in Azure Data Lake Gen2, so we needed to connect the cluster to ADLS.
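To make the job cluster versus interactive cluster trade-off more concrete, here is a rough sketch of the arithmetic. It only compares cluster running time, which is a proxy for cost rather than an actual bill. It assumes the notebooks run one after another, that the interactive cluster is stopped when the pipeline starts, and that it idles for its full auto-shutdown period after the last notebook. The class and function names are hypothetical, and the auto-shutdown value is purely illustrative; the 4-minute spin-up default is the figure we measured.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PipelineRun:
    """Hypothetical description of one pipeline run (all times in minutes)."""
    notebook_minutes: List[float]         # run time of each notebook activity
    spin_up_minutes: float = 4.0          # spin-up time measured in this post
    auto_shutdown_minutes: float = 10.0   # illustrative inactivity timeout


def job_cluster_minutes(run: PipelineRun) -> float:
    """Each notebook gets a fresh cluster, so spin-up is paid per notebook."""
    return sum(run.spin_up_minutes + m for m in run.notebook_minutes)


def interactive_cluster_minutes(run: PipelineRun) -> float:
    """One cluster starts once, is reused, then idles until auto-shutdown."""
    return (
        run.spin_up_minutes
        + sum(run.notebook_minutes)
        + run.auto_shutdown_minutes
    )


if __name__ == "__main__":
    run = PipelineRun(notebook_minutes=[5.0, 5.0, 5.0], auto_shutdown_minutes=2.0)
    print("job clusters:", job_cluster_minutes(run))
    print("interactive cluster:", interactive_cluster_minutes(run))
```

Under these assumptions, the interactive cluster comes out ahead once the spin-up time saved across notebooks exceeds the idle time before auto-shutdown, which is why a short auto-shutdown setting matters.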

To start with, you create a new connection in ADF. By choosing compute, and then Databricks, you are taken through to a screen where you choose whether you want to use a job cluster or an existing interactive cluster. If you choose a job cluster, a new cluster will be spun up each time you use the connection. It should be noted that cluster spin-up times are not insignificant: we measured them at around 4 minutes. Therefore, if performance is a concern, it may be better to use an interactive cluster, which is a pre-existing cluster.
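The same choice can also be expressed in the JSON definition of the connection (a linked service in ADF terms). The sketch below builds such a definition as a Python dictionary. The property names (`domain`, `accessToken`, `existingClusterId`, `newClusterVersion`, `newClusterNodeType`, `newClusterNumOfWorker`) reflect my understanding of the Azure Databricks linked service schema and should be checked against the official documentation. The helper name and every placeholder value are hypothetical.

```python
import json
from typing import Optional


def databricks_linked_service(
    name: str,
    domain: str,
    existing_cluster_id: Optional[str] = None,
) -> dict:
    """Build a linked service definition (sketch; schema is an assumption).

    Passing existing_cluster_id selects an interactive cluster; omitting it
    describes a job cluster that is created for each use of the connection.
    """
    type_properties = {
        "domain": domain,
        # Placeholder only: never hard-code a real token.
        "accessToken": {"type": "SecureString", "value": "<access-token>"},
    }
    if existing_cluster_id is not None:
        type_properties["existingClusterId"] = existing_cluster_id
    else:
        type_properties.update(
            {
                "newClusterVersion": "<runtime-version>",
                "newClusterNodeType": "<node-type>",
                "newClusterNumOfWorker": "2",
            }
        )
    return {
        "name": name,
        "properties": {
            "type": "AzureDatabricks",
            "typeProperties": type_properties,
        },
    }


if __name__ == "__main__":
    interactive = databricks_linked_service(
        "DatabricksInteractive", "https://<region>.azuredatabricks.net", "<cluster-id>"
    )
    print(json.dumps(interactive, indent=2))
```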

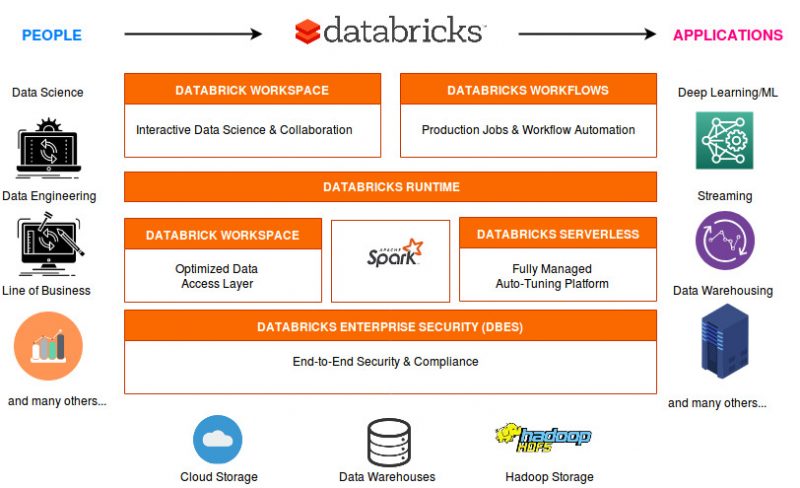

By Carmel Eve, Software Engineer I, 10th May 2019

I recently wrote a blog on using ADF Mapping Data Flow for data manipulation. As part of the same project, we also ported some of an existing ETL Jupyter notebook, written using the Python Pandas library, into a Databricks Notebook. This notebook could then be run as an activity in an ADF pipeline, and combined with Mapping Data Flows to build up a complex ETL process which can be run via ADF.

Databricks is built on Spark, which is a "unified analytics engine for big data and machine learning". It allows you to run data analysis workloads, and can be accessed via many APIs (Scala, Java, Python, R, SQL, and now .NET!), though only Scala, Python and R are currently built into Notebooks. This means that you can build up data processes and models using a language you feel comfortable with.

With the Big Data Tools plugin, you can monitor your Spark jobs. In the Big Data Tools window, click the button for adding a connection and select Spark. In the Big Data Tools dialog that opens, specify the connection parameters:

- Name: the name of the connection, to distinguish it from other connections.
- Enable connection: deselect if you want to disable this connection. By default, newly created connections are enabled.
- Enable tunneling: creates an SSH tunnel to the remote host. It can be useful if the target server is in a private network but an SSH connection to a host in the network is available. Select the checkbox and specify a configuration of an SSH connection (you can also create a new SSH configuration from here).
- Enable HTTP basic authentication: connect with HTTP authentication using the specified username and password.
- Proxy: select whether you want to use the IDE proxy settings or specify custom proxy settings.

Once you fill in the settings, click Test connection to ensure that all configuration parameters are correct. Then click OK.

At any time, you can open the connection settings in one of the following ways:

- Go to the Tools | Big Data Tools Settings page of the IDE settings (Control+Alt+S).
- Click the corresponding button on the Spark monitoring tool window toolbar.

Once you have established a connection to the Spark server, the Spark monitoring tool window appears. The window consists of several areas to monitor data for:

- Application: a user application being executed on Spark.
- Job: a parallel computation consisting of multiple tasks.
- Environment: runtime information and Spark server properties.
- Executor: a process launched for an application that runs tasks and keeps data in memory or disk storage across them.
- SQL: specific details about the execution of SQL queries.

You can also preview info on Tasks, units of work that are sent to one executor. Refer to the Spark documentation for more information about these types of data.

In the list of the application jobs, select a job to preview. To focus on a particular stage, switch to the Stages tab.
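The monitoring areas described in this section (application, job, stage, executor, environment) correspond to resources that Spark itself exposes through its monitoring REST API under `/api/v1`. Whether the plugin uses these endpoints is not stated here, so treat the following as an independent sketch. The helper name, base URL and application id are hypothetical, and the code only builds the URLs; it does not contact a server.

```python
from typing import Dict
from urllib.parse import quote


def monitoring_endpoints(base_url: str, app_id: str) -> Dict[str, str]:
    """Return Spark monitoring REST API URLs for one application.

    base_url is the Spark UI or history server address (an assumption of
    this sketch), for example a driver UI address on port 4040.
    """
    root = f"{base_url.rstrip('/')}/api/v1/applications"
    app = f"{root}/{quote(app_id, safe='')}"
    return {
        "applications": root,              # list of applications
        "jobs": f"{app}/jobs",             # jobs of this application
        "stages": f"{app}/stages",         # stages of this application
        "executors": f"{app}/executors",   # active executors
        "environment": f"{app}/environment",
    }


if __name__ == "__main__":
    for area, url in monitoring_endpoints("http://localhost:4040", "app-1").items():
        print(f"{area}: {url}")
```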
